New to Chatbots? Understand Your Security Risk

With the increasing use of chatbots as a frontline tool for businesses, organizations need to take a closer look at the security of such services and include them in their threat model.

Chatbots are easily the best internet phenomenon since “We use cookies” popups. Powered by the likes of Drift, Freshworks, Bold360 and others, these tools help companies serve more customers more quickly, at a time of growing consumer impatience when every second counts. Despite the occasional annoyance, they're highly effective and efficient tools for many sales and support teams around the globe.

Like any new technology, chatbots can also pose a potential security gap if vendors and organizations aren’t aligned in their deployment. At Tenable, we worked closely with our vendor to map any potential issues and ensure a secure user experience across our website. Organizations can get ahead of potential threats by understanding the security risks around these new tools and following common best practices.

How do Chatbots work?

Today’s chatbots serve a variety of purposes. They’re great at:

- Generating sales leads

- Answering common support questions

- Redirecting site visitors to other resources or contacts

That said, these bots can't do everything, so at some point, a human has to get involved. Many interactions can be handled directly through the chat service provided by the bot, such as a support person walking a customer through some common troubleshooting steps. In more complex situations, however, these bots will often schedule meetings or send messages to be handled outside of the chat session. For example, if a prospective customer wants to chat with a salesperson about terms for a potential deal, they'll have a quick back and forth with the bot, which will result in a meeting with a salesperson being scheduled. How does this work? Well, this is where the security gap comes in.

Security Impact

Companies are finding more ways to integrate these bots into their existing business models. These bot services have built-in functionality that gives them permission to schedule meetings and send messages as an individual from a given pool of users. These meeting invites and emails appear to come directly from those individuals and not from a third-party service. In some cases, these chatbots may also need to collect personally identifiable information (PII) or payment information. This creates additional risk and raises several security concerns around how the collected data is handled.

Let’s first consider the impacts of collecting PII. Organizations need to understand how the chatbot platform integrates with their business. Whether the chatbot requires privileged access to backend systems for authentication or account authorization is a major security concern. Additionally, one has to consider whether the traffic is encrypted, how information is stored and, equally important, how the chats are logged. If an attacker were able to identify a vulnerability in a chatbot application, this could open an opportunity to access privileged and possibly sensitive data. If the data collected is only stored with the chatbot service provider, there’s the added risk of not being able to control how that data is secured, both in transit and at rest. In 2018, Sears and Delta suffered a breach of payment data when a third-party chatbot service they utilized was compromised.

Additional concerns arise in cases where the chatbot is used as a scheduling mechanism, because anyone chatting with the bot can trigger invites and messages that are sent in an employee's name. In a best-case scenario, abuse of this feature causes nothing more than minor annoyance and embarrassment. In others, the consequences could be much more severe. An attacker could launch advanced social engineering attacks by essentially sending messages as a trusted insider for the company using the chatbot service.

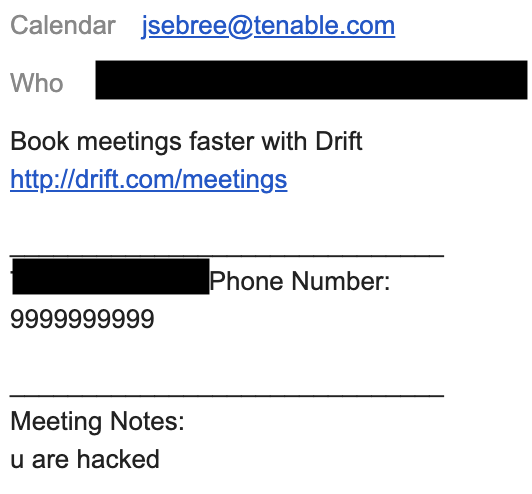

As an example of an attack Tenable has previously observed in the wild, let’s say your company uses a chatbot service on its site to generate sales leads. If an attacker happens to know or guess the email addressing scheme or internal mailing lists the company uses (such as [email protected]), they could send messages on behalf of a salesperson to anyone within the organization. In the example pictured above, the meeting notes section of a calendar invite could include any message an attacker wanted, even one containing malicious links. This email appears to come from a trusted source and is not marked as coming from the outside world, effectively bypassing all existing email protections, such as DomainKeys Identified Mail (DKIM). As the author appears to be a trusted source, employees would be more likely to follow the malicious link in the invite.

While this example is not a major breach of security, it does demonstrate how one of these chatbot services can be abused. What happens if the attacker starts filing IT requests on behalf of a salesperson? A sophisticated attacker with malicious intent could wreak havoc by abusing the functionality provided by the bot, perhaps by requesting ports to be opened on the company firewall or applications/services to be installed.

Solutions and best practices

As chatbot use and scope of services continues to expand, the following solutions and best practices can help increase security and reduce an organization's risk:

- Authentication: In scenarios where your chatbot needs to authenticate a user in order to provide specific solutions or fulfill requests, it is imperative to consider how the user will authenticate and how the chatbot system will handle these requests. Using two-factor authentication (2FA) adds an additional layer of security. If you use a third-party chatbot service, single sign-on (SSO) solutions may be available and should be utilized. Authentication should also include forced timeouts after a set period of inactivity.

- Encryption: While end-to-end encryption might seem like overkill for basic support questions, encrypting all traffic could help protect vulnerable users. Consider a use-case where a customer using public WiFi enters their account information and password in their query to the chatbot without being solicited for this information.

- Logging: Careful consideration must be given to what data is collected and how this data is stored. There may be international laws to consider based on a user's geographic location, but also consider the above example, where a user inputs their username and password into the chat. One solution might be to delete all chat data once the session is complete or closed due to a timeout condition. Other solutions might include matching specific keywords to scrub out any sensitive data from logs.
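The keyword-matching idea from the logging item can be sketched as a small filter that runs before a chat message is written to a log. This is a minimal illustration, not a complete data-loss-prevention solution: the `scrub` function, the keyword list and the card-number pattern are all assumptions made for this example, and a real deployment would tune them to its own data.

```python
import re

# Hypothetical patterns chosen for illustration only.
_PATTERNS = [
    # Secrets typed next to a keyword, e.g. "password: hunter2",
    # "pwd=hunter2" or "my password is hunter2".
    (
        re.compile(r"(?i)\b(password|passwd|pwd|passcode)\b(\s*[:=]\s*|\s+is\s+)\S+"),
        r"\1\2[REDACTED]",
    ),
    # Runs of 13 to 16 digits, optionally separated by spaces or dashes,
    # which often indicate a payment card number.
    (re.compile(r"\b(?:\d[ -]?){12,15}\d\b"), "[REDACTED-NUMBER]"),
]


def scrub(message: str) -> str:
    """Return a copy of a chat message with likely secrets masked."""
    for pattern, replacement in _PATTERNS:
        message = pattern.sub(replacement, message)
    return message


if __name__ == "__main__":
    print(scrub("my password is hunter2 please help"))
    print(scrub("card 4111 1111 1111 1111 thanks"))
```

Pattern matching of this kind will miss secrets that are not introduced by a keyword, so it complements deleting chat data at the end of a session rather than replacing it.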

For the meeting-invite abuse described earlier, the easy solution would be to simply blocklist or deny your own company from being able to receive these messages, which is not a default behavior or configuration for many of these services. This mitigation doesn't, however, prevent an attacker from sending unsolicited messages to third parties. Other solutions could include forcing these messages to come from a separate, designated domain and ensuring that no internal processes rely strictly on email. These solutions would allow more flexible monitoring and filtering.
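As a rough sketch of the blocklist idea, the check below rejects any invite recipient whose address belongs to the company's own domain or one of its subdomains. It assumes the chatbot integration offers some hook where recipients can be vetted before an invite is sent, which will vary by vendor. The function name `is_allowed_recipient` and the domain `example.com` are placeholders, not part of any real chatbot API.

```python
# Hypothetical set of domains owned by the company deploying the chatbot.
INTERNAL_DOMAINS = frozenset({"example.com"})


def is_allowed_recipient(address: str, internal_domains=INTERNAL_DOMAINS) -> bool:
    """Return True only for well-formed addresses outside the company's domains."""
    local, sep, domain = address.strip().rpartition("@")
    if not sep or not local or not domain:
        return False  # not a usable email address, so reject it
    domain = domain.lower().rstrip(".")
    # Reject exact matches and subdomains such as mail.example.com.
    return not any(
        domain == internal or domain.endswith("." + internal)
        for internal in internal_domains
    )


if __name__ == "__main__":
    for candidate in ("buyer@customer.org", "sales-all@example.com"):
        print(candidate, is_allowed_recipient(candidate))
```

A check like this only protects the company's own mailboxes. As noted above, it does nothing for third parties who receive invites, which is why a separate sending domain and monitoring remain worthwhile.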

Conclusion

The risks involved with chatbot attacks are likely to be more of an annoyance than anything else. Depending on a company’s configuration, sophisticated social engineering attacks could definitely occur, but to our knowledge at the time of this writing, social engineering through a chatbot hasn't been the sole cause of any major breaches. Despite the current risk profile for chatbots, they're still something security organizations within companies should be monitoring and paying closer attention to. This certainly isn't the type of attack we see every day, and chatbots are a pretty innocuous piece of software that most people don't realize wields this sort of power. However, we believe it’s important to take a closer look at the chatbots your organization utilizes and make sure they're included in your threat models.

Get more information

See vulnerabilities reported to vendors from Tenable's Research Teams on the Tenable Research Advisories page.

Learn more about Tenable, the first Cyber Exposure platform for holistic management of your modern attack surface.

Get a free 30-day trial of Tenable.io Vulnerability Management.
