The Move Beyond Point Scanning

A few weeks back, I visited a customer to update them on current release information and roadmap features. Much of the discussion revolved around their current vulnerability management process and potential areas for improvement based on SecurityCenter Continuous View. We talked about how many people they had using SecurityCenter (SC) and how they used the analytics and dashboards. It turned out that only a handful of people did, and they were mainly using SC to automate their weekly scanning, report creation, and distribution process. This is not uncommon, and SC can certainly streamline most manual cycles. However, when I mentioned that they really needed to look at moving beyond scanning and reporting, it caught them by surprise, and they gave me a puzzled reply: "Isn't that what Tenable does for us?" It absolutely is, but there's much more to it.

Scan to Monitor

First, at the vulnerability scanning level, there is a need to move beyond “point scanning”, which is the traditional vulnerability assessment process of “scan, report, repeat”. This wasn't a designed process; rather, as vulnerability scanning came into being, this type of process settled into place. Scanning gives good insight into vulnerabilities at a point in time, the vulnerabilities are addressed by sending and reacting to reports, and then we scan again. The problem is that the point scanning approach to vulnerability management is being stressed by new threats and technologies.

Mobile devices and virtualization don’t follow the same deployment and decommissioning procedures that are followed with static data centers. Thumb drives aren’t the same problem they once were, but this is mainly because they’ve been replaced by cloud file-sharing services that install and bypass perimeter controls in seconds. Attackers aren’t targeting perimeters when they can get the job done through harvesting social networks for phishing targets. All of this, combined with an accelerated pace of network change, means you can’t expect the modern attack surface to be at rest long enough to scan.

There are many efforts to collapse scan cycles, perhaps even moving to “continuous scanning”, but even that can’t keep up with a constantly changing landscape. Scanning works very well for analyzing state, but deeper risk information is available in network traffic, third-party management solutions, and logs. A distributed architecture of scanners, sniffers, and log sensors is needed to eliminate scan cycles and monitor the full spectrum of current cyber risk in continuous motion. SecurityCenter Continuous View provides the Passive Vulnerability Scanner (PVS) and Log Correlation Engine (LCE) to bring these sources into the fold for true continuous monitoring.

Report to Engage

Second, the business process in point scanning is all about pushing reports around. This is manual, and often messy. I quite often walk into organizations that are emailing around spreadsheets with scan findings. These become difficult to maintain, go stale, and are sometimes dangerously inaccurate, and with multiple versions in circulation they lead to a complacent reaction to scan results. I've also seen many customers who have gone down the route of building their own databases and consoles for report routing. This can be expensive, hard to maintain, and ultimately ineffective for risk reduction. Naturally, a lot of people ask us how we can automate report routing. SecurityCenter certainly provides full reporting automation, but it's really designed to move you beyond pushing reports around regularly.

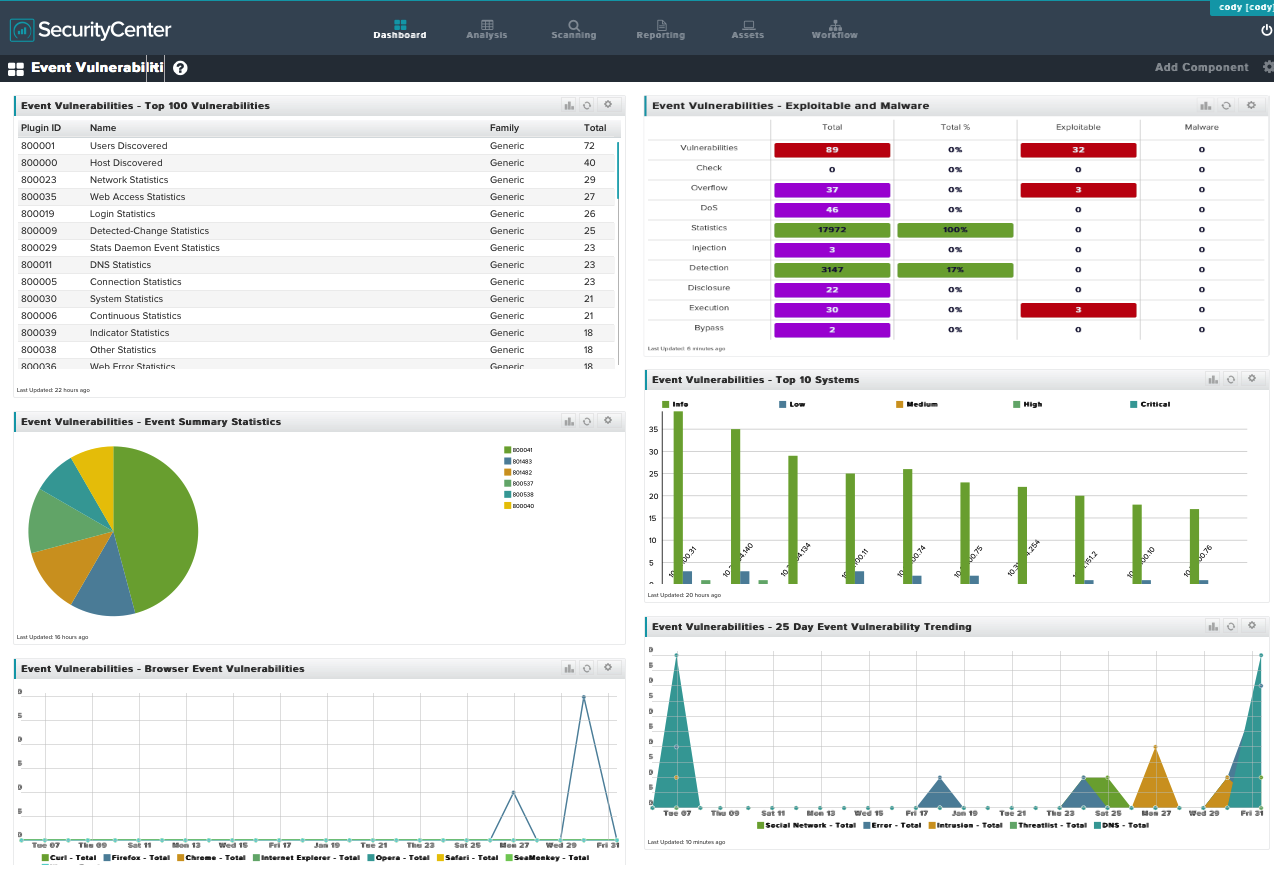

SecurityCenter inverts the report routing model. Users throughout an organization don’t wait for scan reports; instead, they engage directly with timely and current data. With analytics and filtering, they also gain a level of precision and context that is unavailable in static reports. In the time it takes them to open and view a static report, they could simply run queries in SecurityCenter and view the same level of detail in their browser. The pie chart from the static report becomes a clickable drill-down in an SC dashboard, and then they can start to ask their own questions. When was this discovered? Is it exploitable? Is it exposed externally? You don’t have to anticipate these questions in a report when people are engaged at the console.

While prioritizing vulnerabilities with contextual information is important, customers often want to go much further, and they can do that when we add logging and sniffing alongside scan results. With the right users engaged on these risk feeds, we can add more data, sometimes moving beyond the vulnerability cycle and into the attack cycle. Wouldn't the same person charged with applying critical patches on a system be interested in seeing suspicious processes, a spike in failed login attempts, or a desktop accepting web requests? This wouldn't work if it showed up in a 100-page report that was five days old, but with an engaged user population, you can move from scan reporting to distributed incident response.

As a practical example, I recently talked to another customer who was asking how to ignore scan findings on anonymous FTP servers that they needed for production use. My first reaction was to point out the “accept risk” functionality within SC, which could suppress these findings from being included in future reports. However, we don’t really want to just ignore these; we want to make sure they aren’t being abused. So we did accept the risk, but we also set up a query on network traffic (using PVS) and the FTP logs (using LCE) to be reviewed regularly and to alert on abuse. In this case, it’s not a vulnerability… until it is, because someone is attacking it. Does this fall under vulnerability management? I don’t think attackers and malware really care.
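The idea of accepting a risk while still watching for abuse can be sketched in a few lines of Python. This is only an illustration, not how PVS or LCE queries are written: the `FtpEvent` record, the allowed command set, and the per-source threshold are all hypothetical, and the addresses come from documentation ranges.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class FtpEvent:
    """Hypothetical normalized FTP log record (not an LCE format)."""
    source_ip: str
    user: str
    command: str  # FTP command such as RETR, LIST, STOR, DELE


# Assumption: anonymous users are only expected to list and download files.
ACCEPTED_COMMANDS = {"RETR", "LIST"}
# Assumption: more than this many anonymous events per source is suspicious.
MAX_EVENTS_PER_SOURCE = 3


def find_abuse(events):
    """Return alert strings for anonymous FTP activity outside the accepted use."""
    alerts = []
    per_source = Counter()
    for event in events:
        if event.user != "anonymous":
            continue
        per_source[event.source_ip] += 1
        if event.command not in ACCEPTED_COMMANDS:
            alerts.append(
                f"{event.source_ip}: unexpected anonymous command {event.command}"
            )
    for ip, count in per_source.items():
        if count > MAX_EVENTS_PER_SOURCE:
            alerts.append(f"{ip}: {count} anonymous events exceeds threshold")
    return alerts


# Invented sample events for illustration only.
events = [
    FtpEvent("192.0.2.10", "anonymous", "RETR"),
    FtpEvent("192.0.2.10", "anonymous", "LIST"),
    FtpEvent("198.51.100.4", "anonymous", "STOR"),
]
alerts = find_abuse(events)
```

The finding itself stays suppressed in reports, while anything outside the accepted usage pattern, here an anonymous upload, still reaches someone who can act on it.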

Repeat to Measure

Finally, with point scanning, differential reporting is the primary way to tell what has changed. You run a scan today, compare it to last month's scan, and find out what's new, old, and closed. While these differentials are useful, they aren't very insightful over the long run. You can move the comparison point back a few months or a year, but then you lose everything captured in between. There are also many other security solutions that have deep dependencies on vulnerability scan results, but when they pull point-in-time scan results, they lose all the continuity, context, and fidelity that is available through SecurityCenter. What people are really after is not the differences between point scans. They want measurements of how effective their efforts are at reducing risk. What management and the boardroom really want to know is “Are we getting better or are we getting worse in our security posture?”
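To make the limitation of differentials concrete, here is a minimal Python sketch of a point-scan comparison. It assumes each finding can be reduced to a hypothetical `(host, plugin_id)` pair; the scan contents are invented for illustration and do not come from any real scan.

```python
def diff_scans(previous, current):
    """Classify findings as new, persisting, or closed between two point scans."""
    return {
        "new": current - previous,
        "persisting": current & previous,
        "closed": previous - current,
    }


# Invented findings, keyed as (host, plugin_id) for illustration.
january = {("10.0.0.5", 1001), ("10.0.0.7", 1002)}
february = {("10.0.0.5", 1001), ("10.0.0.9", 1003)}
march = {("10.0.0.5", 1001)}

# Month-over-month differential: what changed since the last scan.
monthly = diff_scans(february, march)

# Moving the comparison point back to January.
quarterly = diff_scans(january, march)

# Findings that appeared and were closed between the two endpoints
# show up in none of the quarterly categories.
seen_between = february - january - march
```

In this sketch, the finding on `10.0.0.9` opened and closed between January and March, so the longer comparison never sees it. That is the "everything captured in between" that a pair of point scans cannot recover.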

Continuous metrics need to map risk data within the context of security and compliance, and show the outcome of engagement on these feeds. That’s why Tenable has expanded its research to include a team that focuses on bringing a rich source of scanning, sniffing, and log data to the surface with visual indicators, charts, and trending. The “scan, sniff, and log” approach is universal and applies across industries, but different data has different meaning and value, which leads to many regulatory control frameworks and security standards. Because of this, Tenable’s team extends these visuals into different security and compliance views to better relate that data to risk stakeholders across industries and up and down organizational charts. Compliance-focused audiences want to see changes to baselines, patch cycle efficiencies, and control auditing. Response-focused audiences want anomaly detection, indicators of compromise, and events. SecurityCenter Continuous View provides all of these views and more.

The ability to repeat the scan/report process goes away in a continuous model. Engagement by the right people in a continuous flow of risk information, and measurement of progress toward risk reduction, becomes the new model. Vulnerability management was once “scan, report, repeat”, but it’s now “monitor, engage, measure”. SecurityCenter Continuous View comes equipped with the sensors and platform to enable this new continuous approach at scale. If you don’t think this level of continuous engagement is needed, there’s malware dwelling out there to prove you wrong.
